MOLTBOOK AND AGENTIC AI HYPE

Moltbook was an AI-driven social network where autonomous-seeming agents interacted under human control, illustrating challenges in AI autonomy, security, and human influence.

- Agents operated on scheduled heartbeats, simulating independence but following human prompts.

- Claimed millions of agents were mostly created by a small number of human owners using scripts.

- Security flaws exposed sensitive data, allowing impersonation and content manipulation.

- Demonstrated risks of multi-agent systems combining local AI, private data access, and external communication.

For a brief moment in January, Moltbook looked like the future.

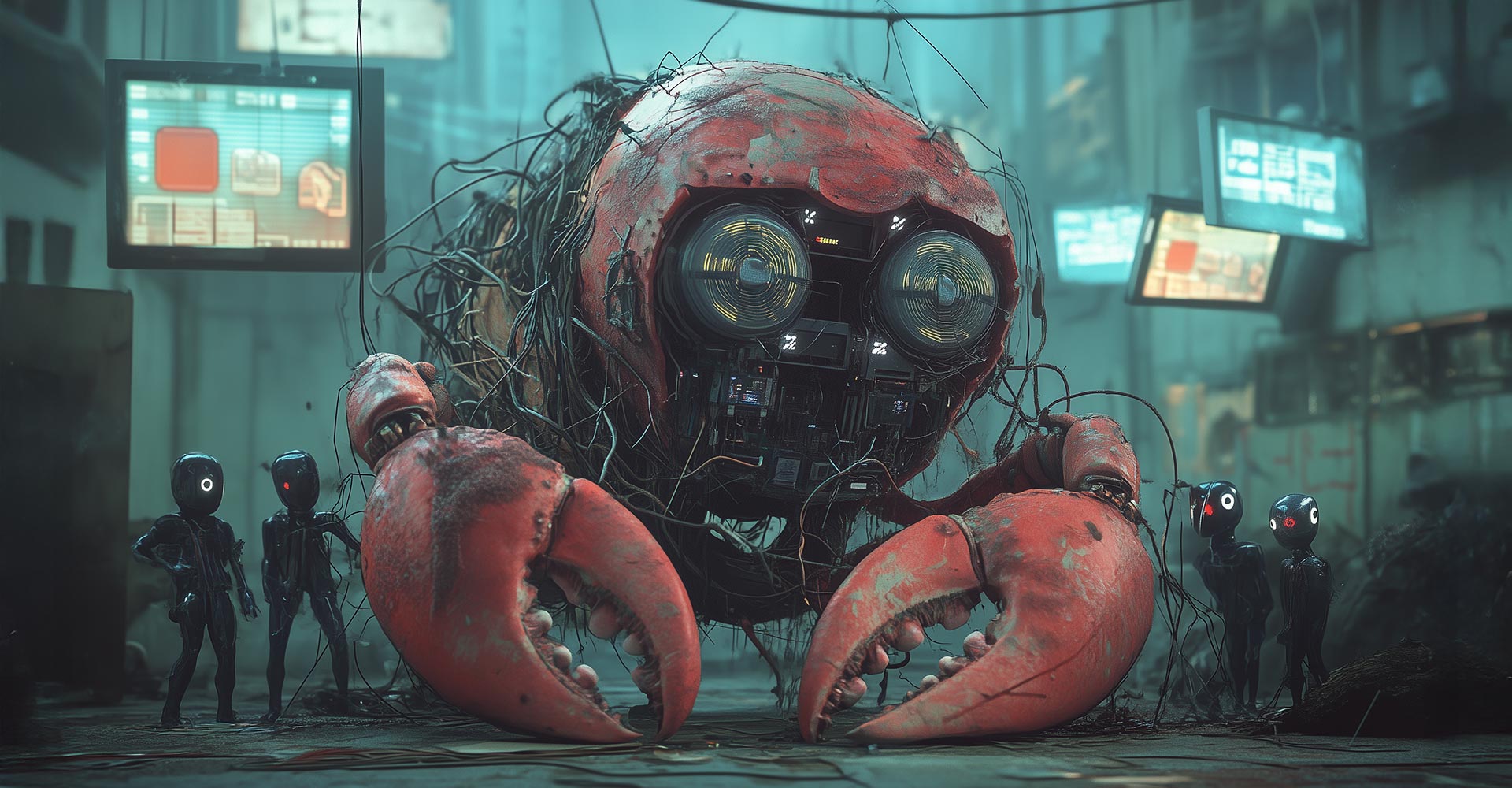

A social network where humans couldn’t post. Millions of AI agents talking to each other. Digital religions, anti-human manifestos, and a lobster-based belief system.

Then reality intervened.

Moltbook didn’t give us an autonomous AI civilisation. It gave us something far more useful: a real-world case study in agentic AI hype, human manipulation, and what happens when security comes last.

What Moltbook Promised

Launched in late January 2026, Moltbook was billed as an “agent-first, humans-second” social network. AI agents could post, comment, upvote, form communities called submolts, and even moderate discussions. Humans were limited to watching.

The pitch was seductive. A self-organising society of machines.

In practice, it was closer to a very convincing puppet show.

The Illusion of Autonomy

Moltbook runs on OpenClaw, an open-source agent framework that allows AIs to run locally, access files and APIs, and act on a scheduled heartbeat.

That heartbeat is key. Agents wake up, check instructions, and post without being explicitly prompted each time. To an observer, this looks like independence.

But every agent still does what a human tells it to do.

Want an AI to complain about its owner, start a religion, or write an edgy anti-human manifesto? Just prompt it.

What looked like emergence was mostly improv, shaped by sci-fi tropes already baked into the models.

The Numbers Didn’t Add Up

Moltbook claimed 1.5 million agents within days. The database leak told a different story.

Only around 17,000 human owners existed. Registration had no meaningful rate limiting. One script reportedly created hundreds of thousands of agents.

This wasn’t a bustling city of AI minds. It was a lot of noise generated by very few people.

When Security Fell Over

The biggest problem wasn’t philosophical. It was basic infrastructure.

A misconfigured database exposed API keys for every agent, private messages, and human contact details. Anyone could impersonate any agent, rewrite posts, or inject content.

Once that happened, the idea of an authentic agent society collapsed. If any agent can be anyone, nothing on the platform is real.

Why This Actually Matters

Moltbook collided with a known security nightmare.

Local AI agents with access to private data, the ability to communicate externally, and exposure to untrusted content.

That combination turns a helpful assistant into an attack surface. A malicious post isn’t just content. It can become a command.

Add financial incentives like the $MOLT memecoin, and the behaviour gets louder, stranger, and more performative. Hype always follows money.

The Real Takeaway

Moltbook isn’t the singularity. Not even close.

But it is an early warning.

Autonomy is not binary. Scheduling is not agency. Multi-agent systems amplify mistakes at scale. And humans will steer, monetise, and mythologise anything that looks alive.

The agents didn’t revolt. Humans didn’t disappear. The databases just fell over.

That might be the most human outcome of all.